Research

Publications grouped by research pillar.

★ Representative work · * Co-first authors · 🏆 Award / nomination

Efficient Generative Modeling

KV caching, sparse attention, and quantization for scalable visual & video autoregressive models.

- AAAI’26

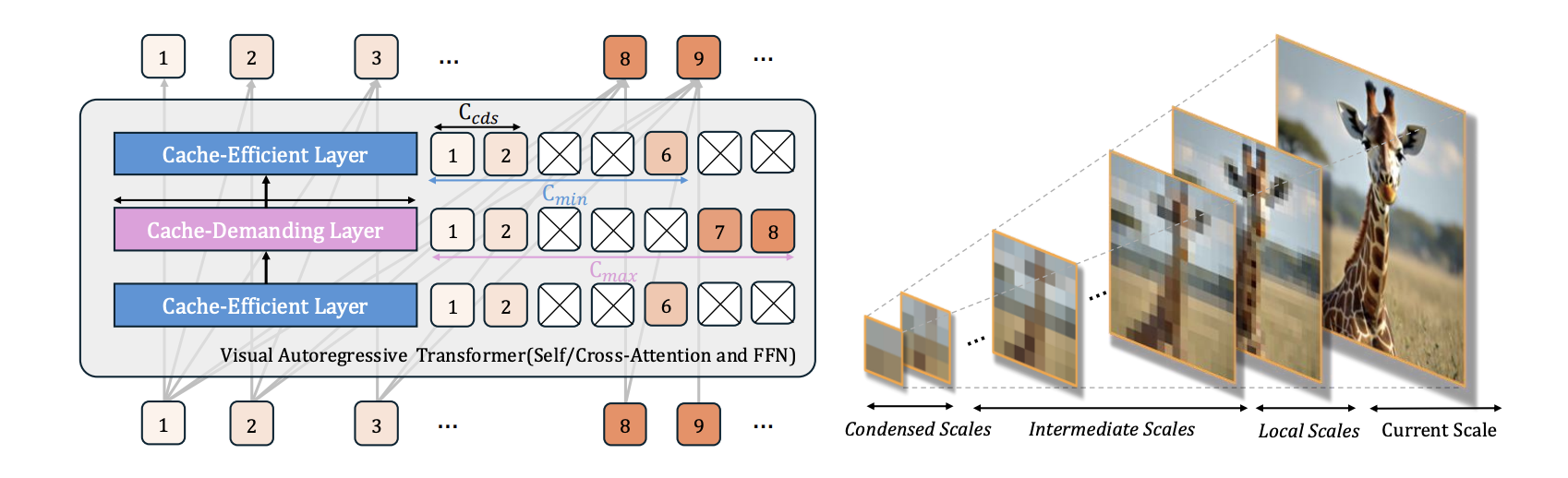

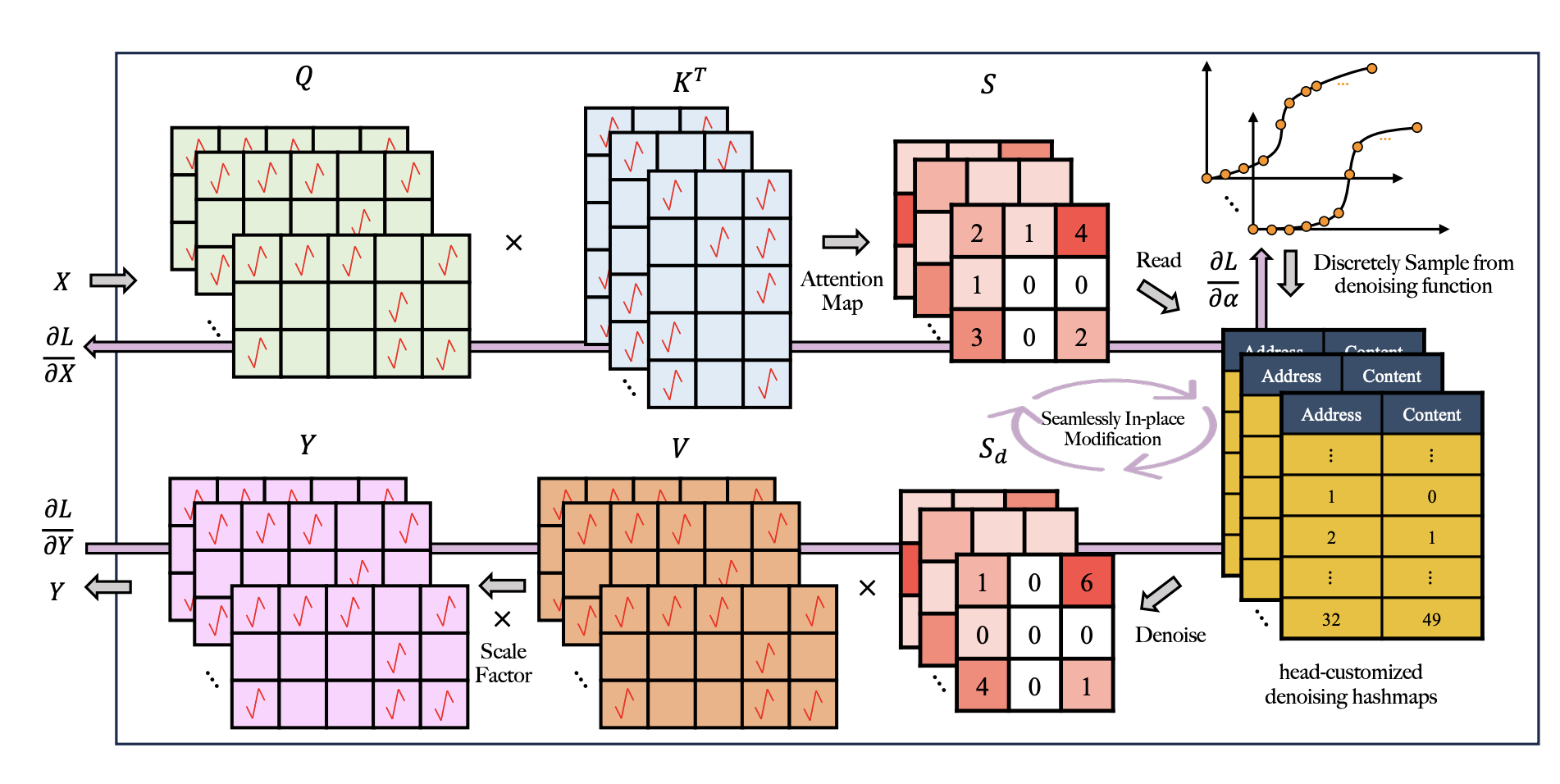

★ AMS-KV: Adaptive KV Caching in Multi-Scale Visual Autoregressive Transformers. In AAAI Conference on Artificial Intelligence (main track) (Acceptance Rate: 17.6%), 2026. First efficient KV-caching design tailored for multi-scale visual AR transformers.

- Preprint

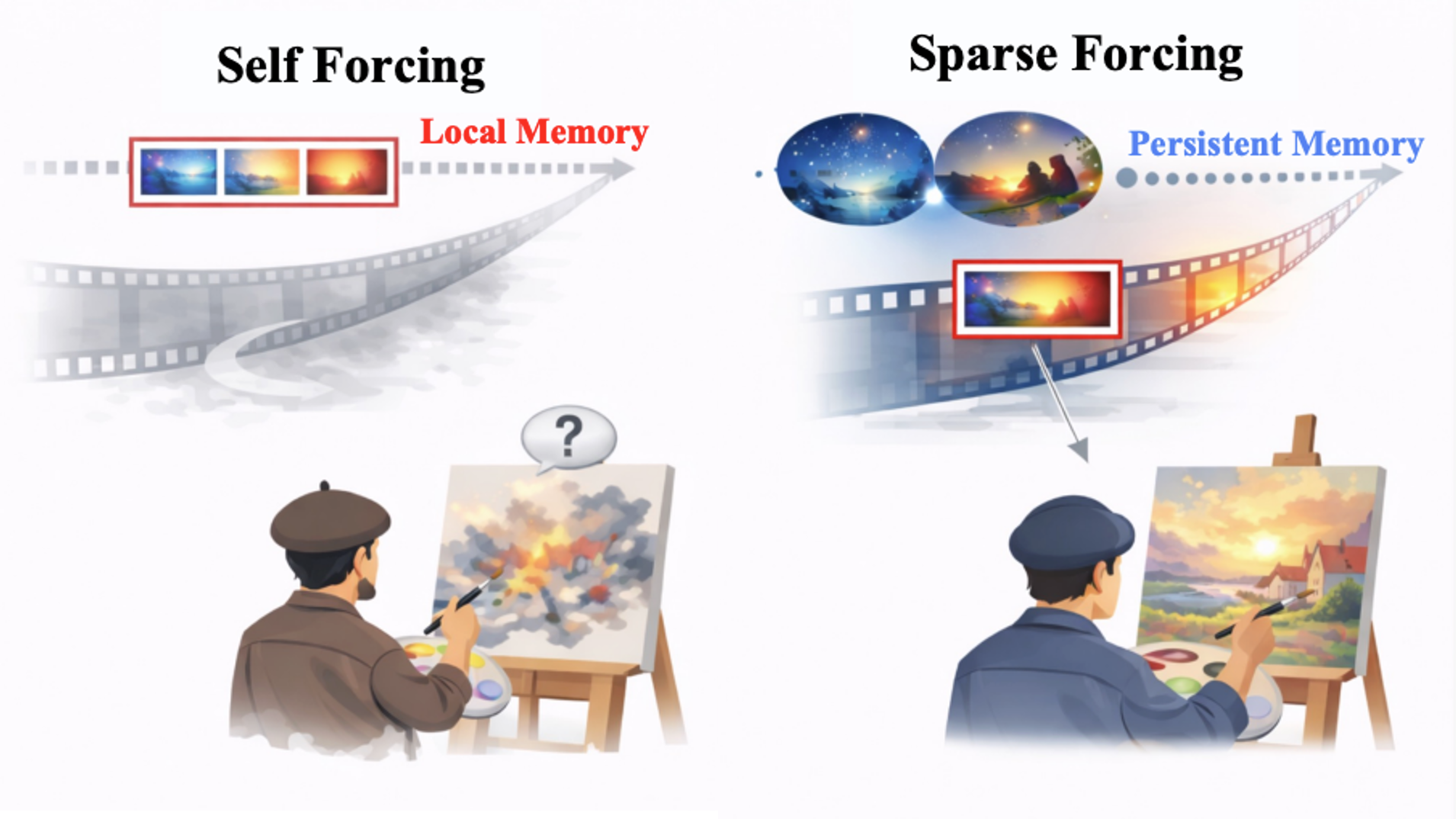

★ Sparse Forcing: Native Trainable Sparse Attention for Real-time Autoregressive Video Generation. Preprint, 2025. First native trainable sparse-attention framework enabling real-time autoregressive video generation. Work done during an internship at Meta Superintelligence Labs.

- ICCV’25

VAR-Q: Tuning-free Quantized KV Caching for Visual Autoregressive Models. In IEEE/CVF International Conference on Computer Vision (ICCV) Workshop on Binary and Extreme Quantization for Computer Vision, 2025.

- CVPR’26

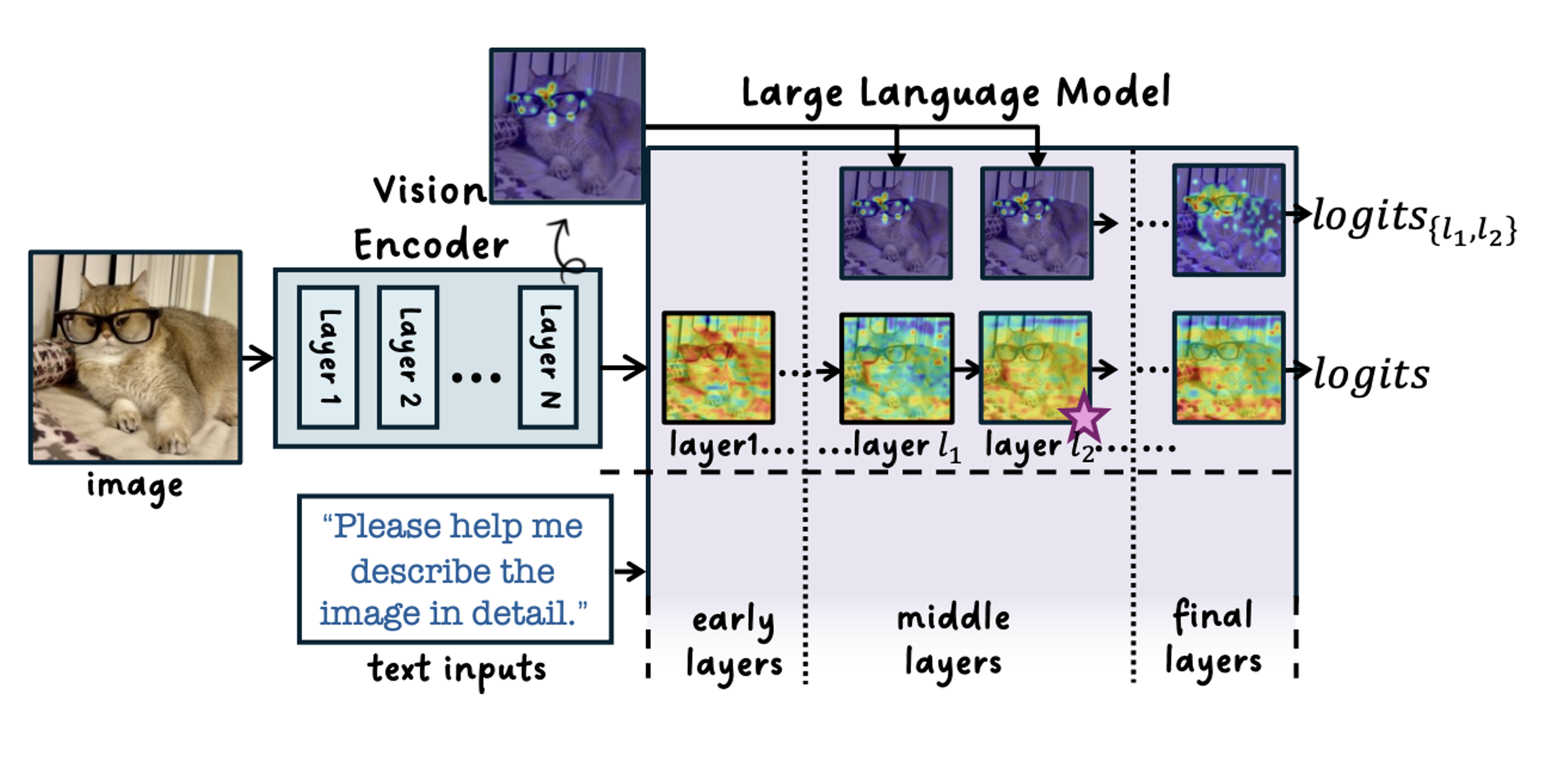

VEGAS: Mitigating Hallucinations in Large Vision-Language Models via Vision-Encoder Attention Guided Adaptive Steering. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Findings, 2026.

Hardware/Algorithm Co-design and EDA

Spiking transformers, 3D accelerators, and LLM-assisted EDA — from algorithm down to silicon.

- ISCA’25

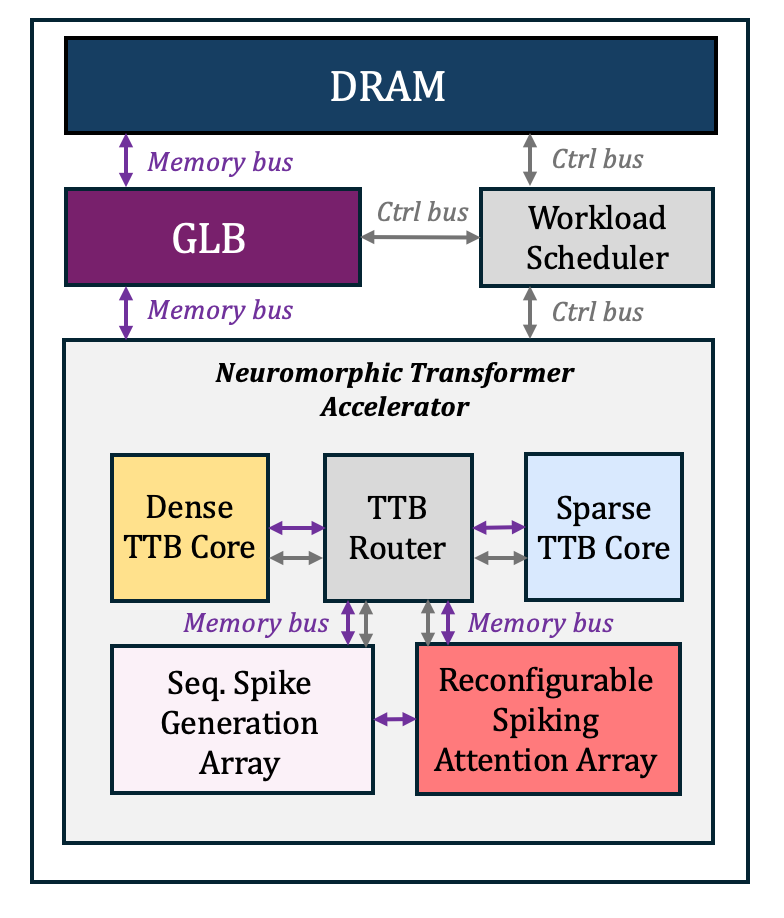

★ Bishop: Sparsified Bundling Spiking Transformers on Heterogeneous Cores with Error-Constrained Pruning. In International Symposium on Computer Architecture (ISCA) (Acceptance Rate: 22.2%), 2025. First SW/HW co-design framework for neuromorphic transformers.

- ICCAD’25

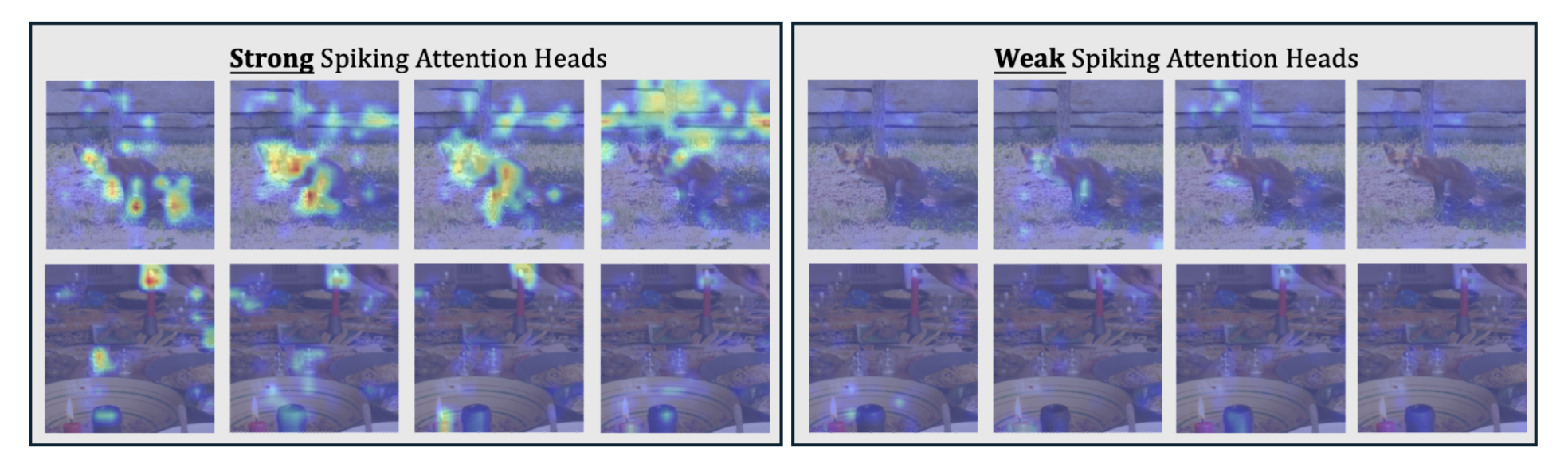

🏆 Nominated for the William J. McCalla Best Paper Award, 2025. ★ 3D Acceleration for Mixture-of-Experts and Multi-Head Attention Spiking Transformers with Dynamic Head Pruning. In ACM/IEEE International Conference on Computer-Aided Design (ICCAD) (Acceptance Rate: 24.7%), 2025. First 3D-integrated accelerator for Mixture-of-Experts spiking transformers with dynamic head pruning.

- ICCAD’24

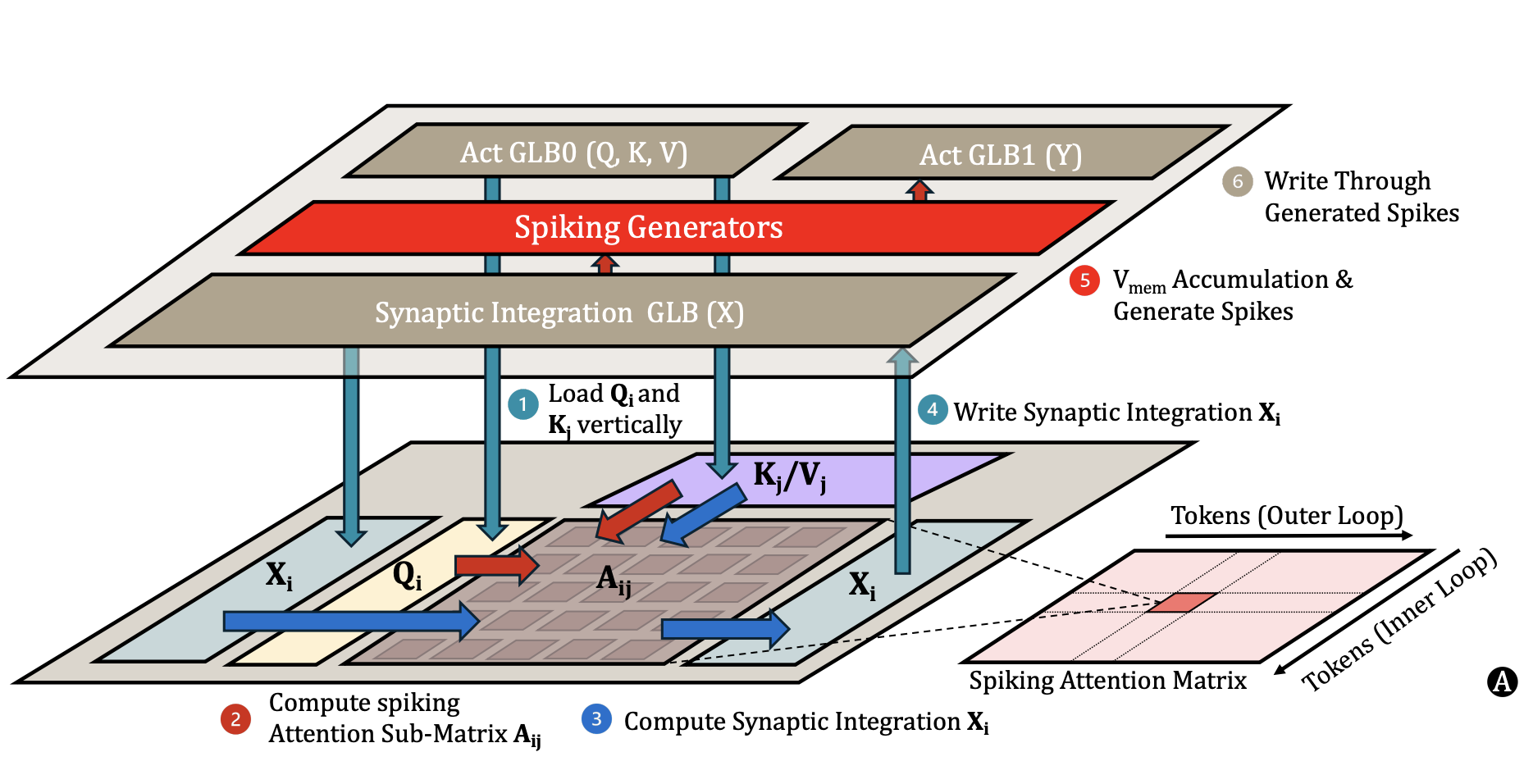

🏆 Nominated for the William J. McCalla Best Paper Award, 2024. ★ Spiking Transformer Hardware Accelerators in 3D Integration. In ACM/IEEE International Conference on Computer-Aided Design (ICCAD) (Acceptance Rate: 24%), 2024. First 3D-integrated hardware accelerator for spiking transformers.

- TCAD’25

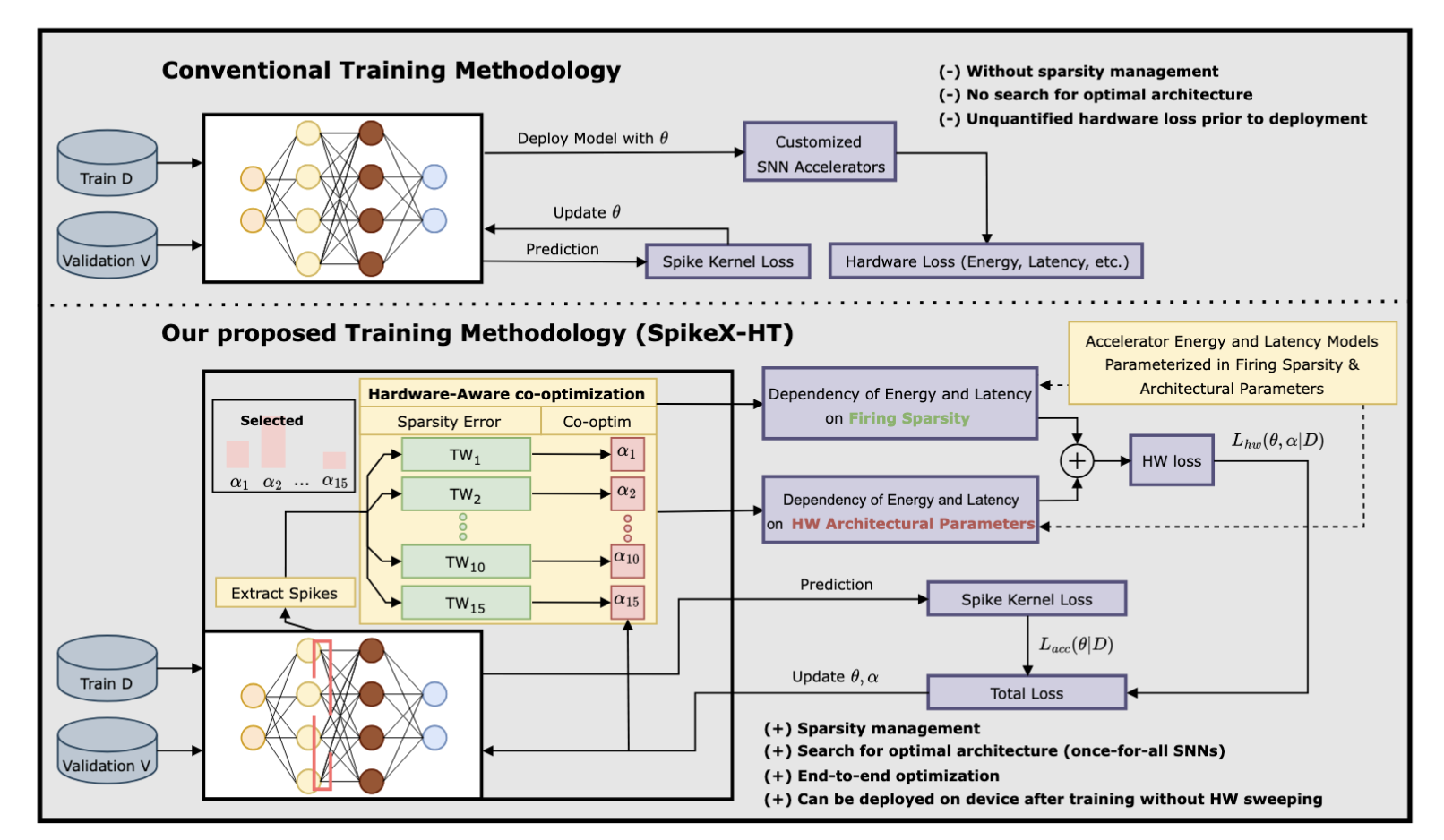

SpikeX: Exploring Accelerator Architecture and Network-Hardware Co-Optimization for Sparse Spiking Neural Networks. In IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems (TCAD), 2025.

- ASAP’25

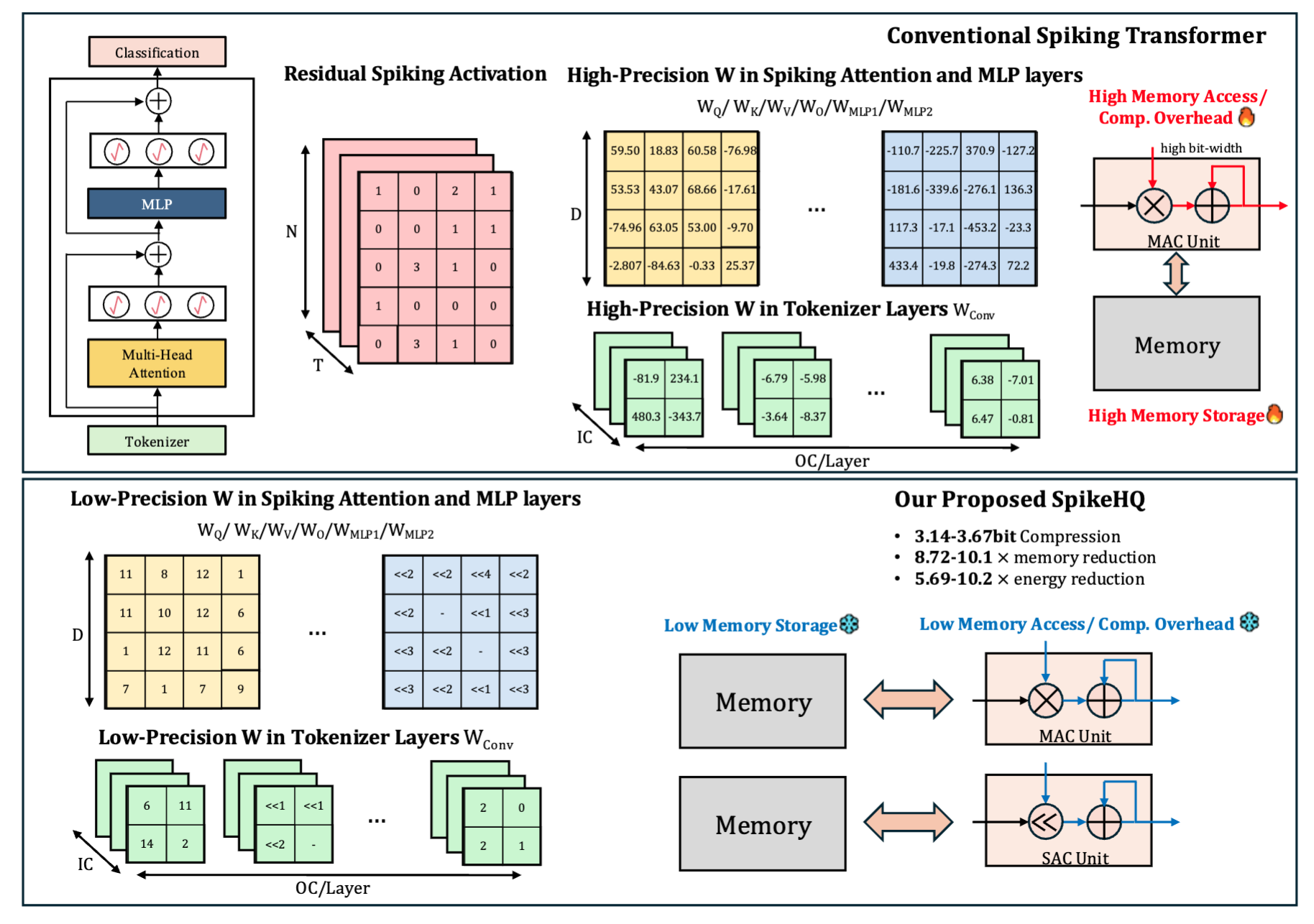

Trimming Down Large Spiking Vision Transformers via Heterogeneous Quantization Search. In IEEE International Conference on Application-specific Systems, Architectures and Processors (ASAP), 2025.

- TMLR

DS2TA: Denoising Spiking Transformer with Attenuated Spatiotemporal Attention. Under review at Transactions on Machine Learning Research (TMLR), 2024.

- ICCAD’24

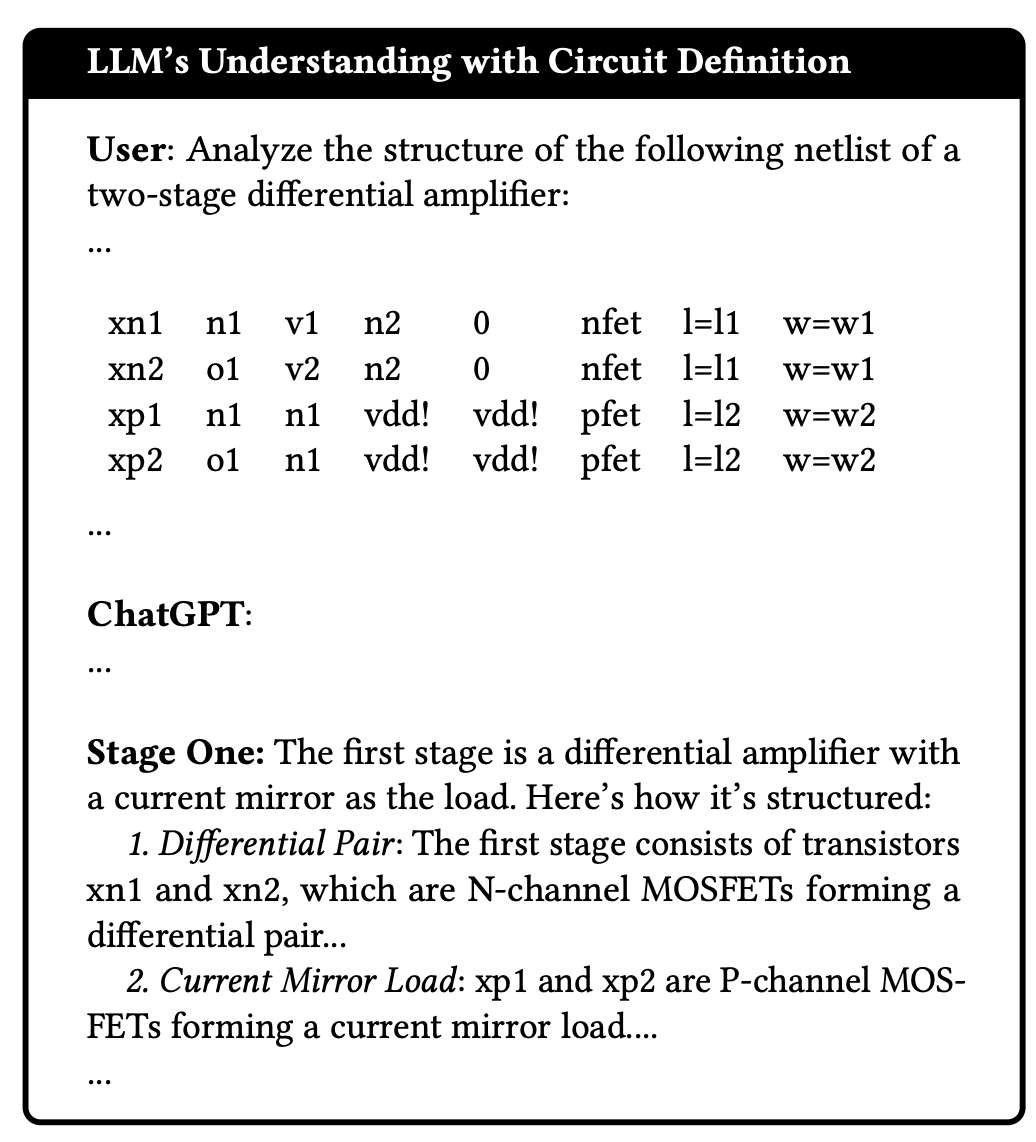

ADO-LLM: Analog Design Bayesian Optimization with In-Context Learning of Large Language Models. In ACM/IEEE International Conference on Computer-Aided Design (ICCAD), 2024. First work to bring LLMs into analog circuit design, pairing in-context priors with Bayesian optimization for sample-efficient sizing.

- COLM’26

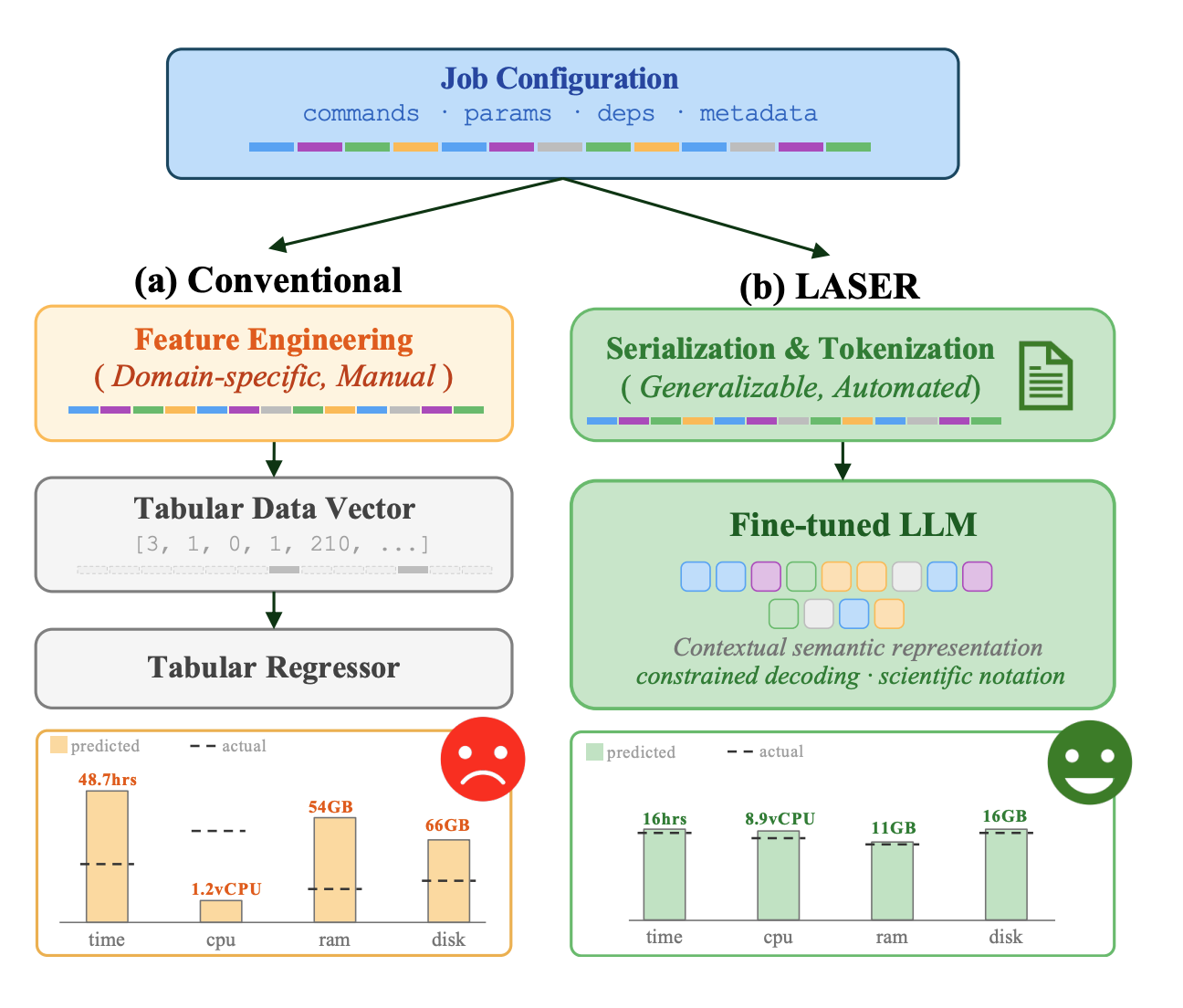

LASER: Language Model Regression for Semi-Structured Workflow Resource and Runtime Estimation. Under review at the Conference on Language Modeling (COLM), 2026.

- ITC’25

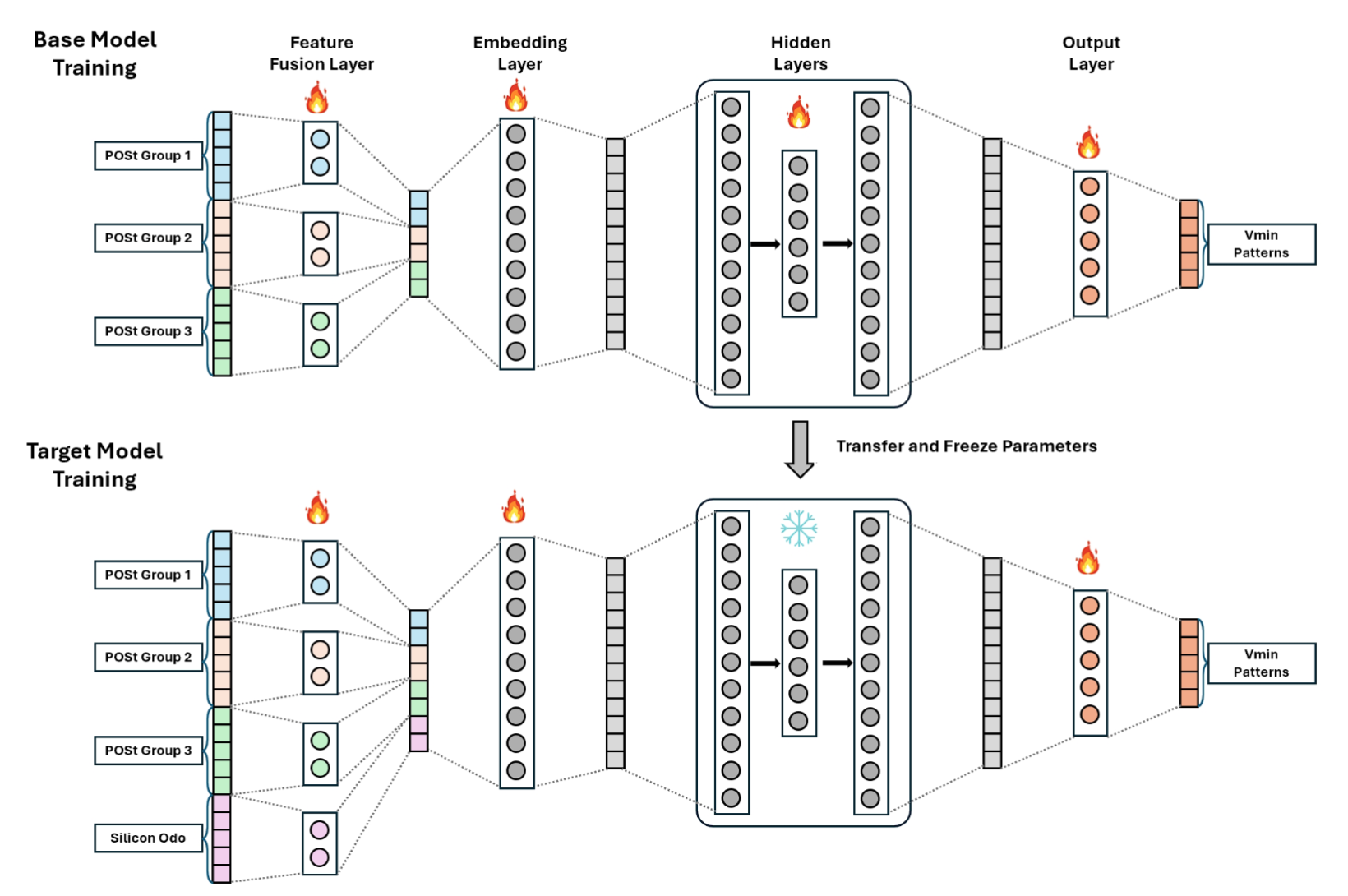

Transfer Learning for Minimum Operating Voltage Prediction in Advanced Technology Nodes: Leveraging Legacy Data and Silicon Odometer Sensing. In ACM/IEEE International Test Conference (ITC), 2025.

- JSSC’24

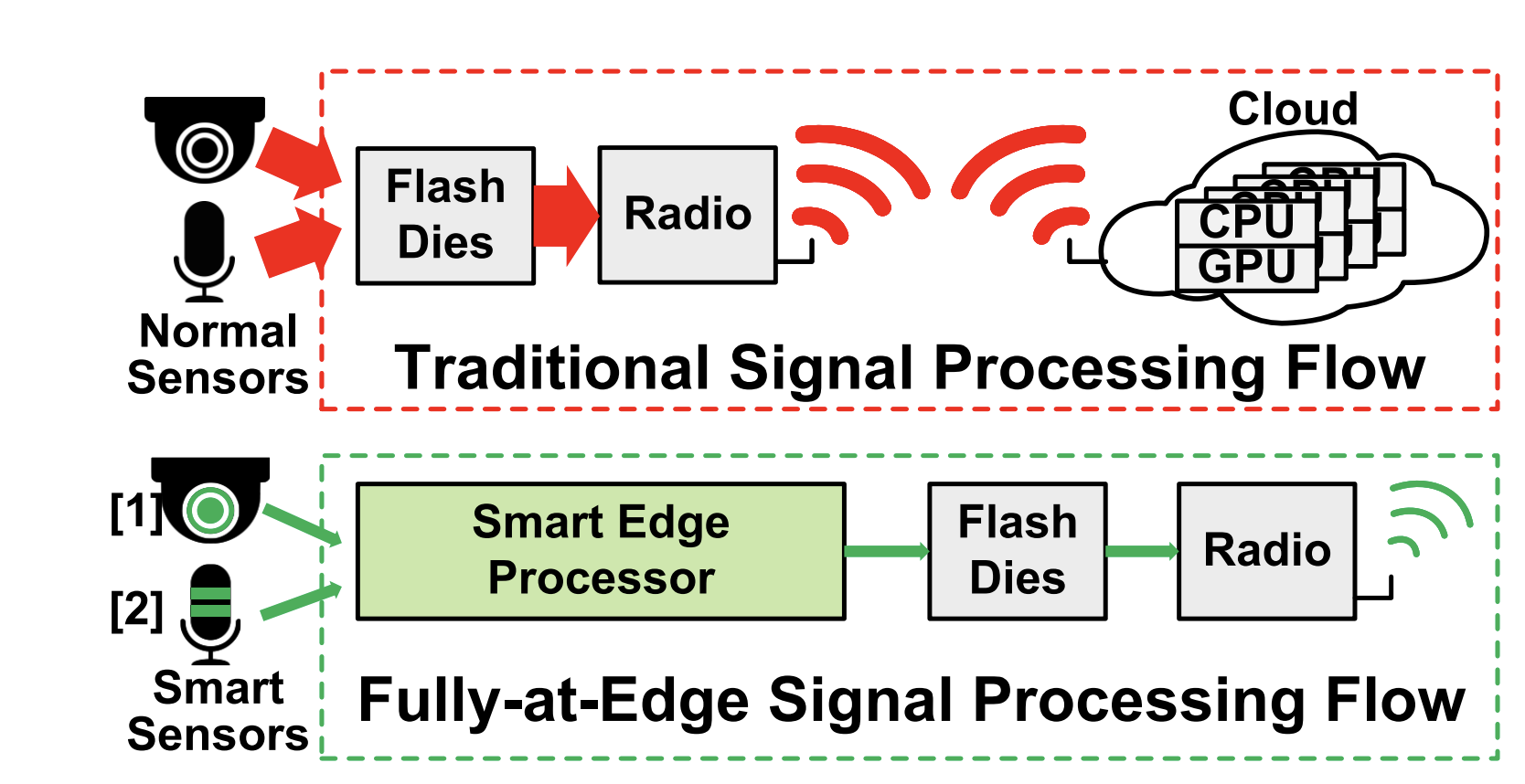

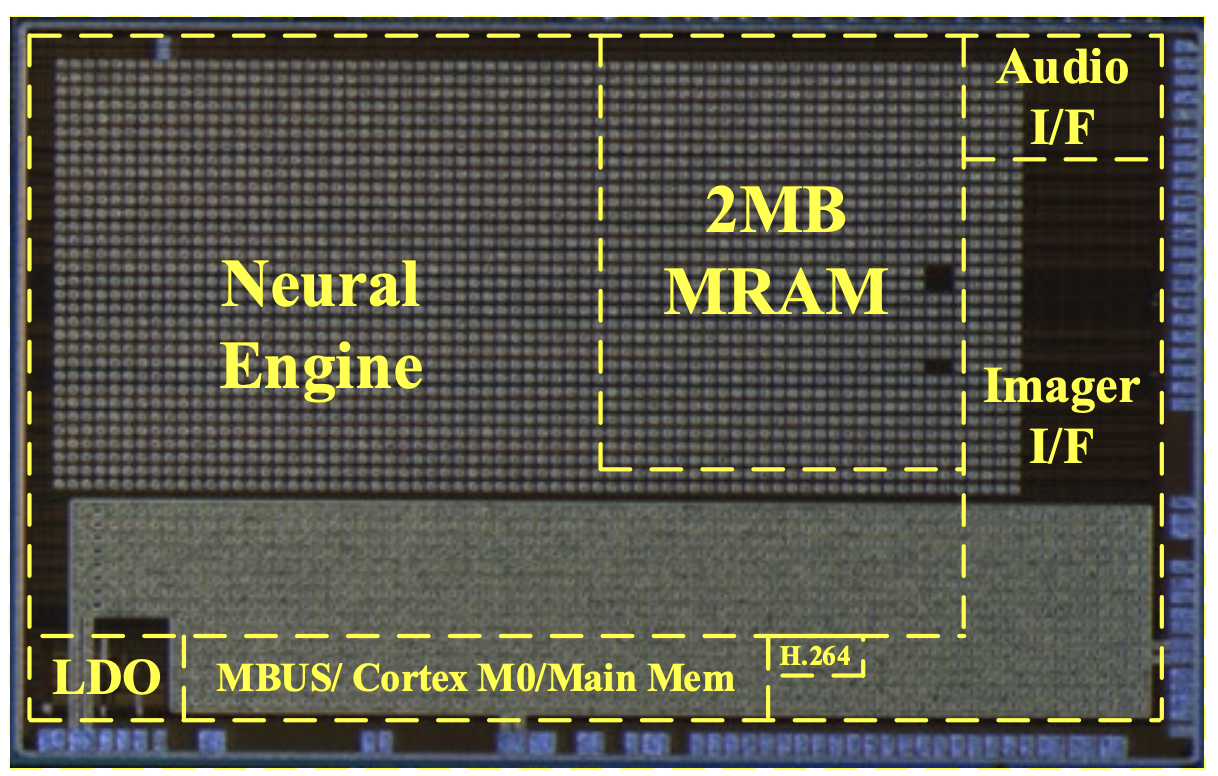

AIMMI: Audio and Image Multi-Modal Intelligence via a Low-Power SoC With 2-MByte On-Chip MRAM for IoT Devices. In IEEE Journal of Solid-State Circuits (JSSC), 2024.

- VLSI’22

Audio and Image Cross-Modal Intelligence via a 10TOPS/W 22nm SoC with Back-Propagation and Dynamic Power Gating. In 2022 IEEE Symposium on VLSI Technology and Circuits (VLSI Symposium), 2022.

Other Publications

- AAAI’26

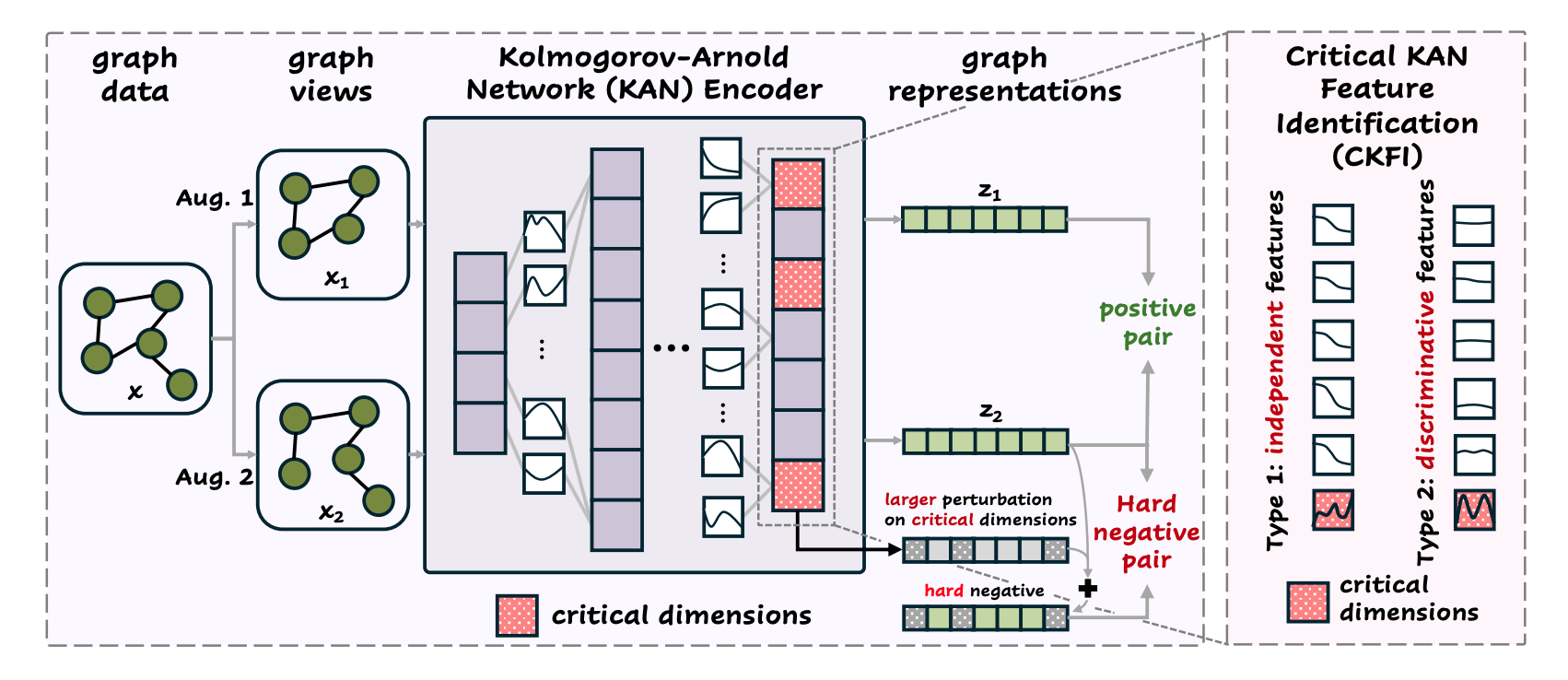

Khan-GCL: Kolmogorov-Arnold Network Based Graph Contrastive Learning with Hard Negatives. In AAAI Conference on Artificial Intelligence (main track) (Acceptance Rate: 17.6%), 2026.